Wget also provides options for bypassing download-prevention mechanisms.

$ wget -r -np -k --random-wait -e robots=off --user-agent "Mozilla/5.0" 'target-url-here'

And if third-party content is to be included in the download, the -H switch can be used alongside -r to recurse to linked hosts.
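As a rough sketch of how the -H switch could be made optional in a script, the options from the command above (written with Wget's double-dash long options) can be collected in a small shell function. The name mirror_site and its second argument are assumptions made up for this example; they are not part of Wget.

```shell
# mirror_site is a hypothetical helper name, not part of Wget.
# Usage: mirror_site URL [yes]
#   Pass "yes" as the second argument to also recurse into linked hosts.
mirror_site() {
    url=$1
    span_hosts=${2:-no}

    # Options taken from the command in the text above.
    set -- -r -np -k --random-wait -e robots=off --user-agent="Mozilla/5.0"

    # -H allows the recursion to leave the starting host.
    if [ "$span_hosts" = yes ]; then
        set -- "$@" -H
    fi

    wget "$@" "$url"
}
```

Calling mirror_site 'target-url-here' yes would then run the same download with -H added. Since -H lets the recursion follow links to any host, it is probably wise to try it on a small site first, or to restrict it with Wget's -D domain list.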

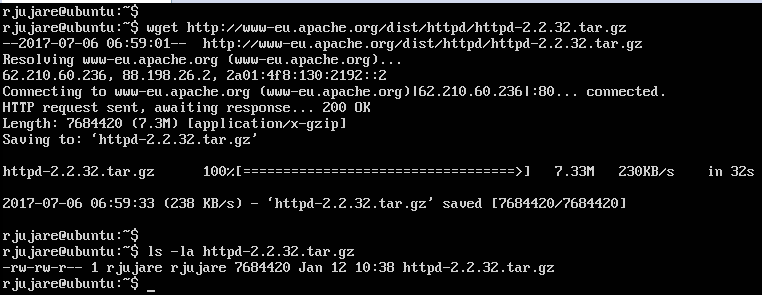

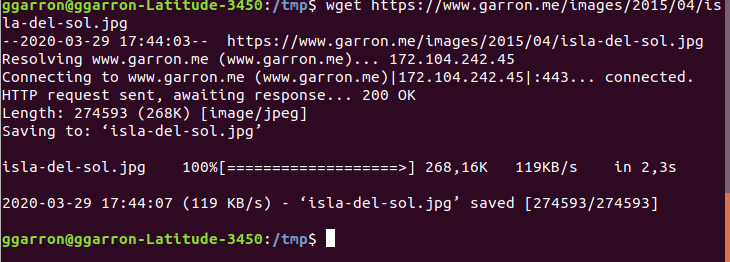

Most Linux distributions will have wget preinstalled as a default package. To check whether the wget package is present, you can run a quick check from the terminal; if it is already installed, the check prints output confirming it.

This section explains some of the use case scenarios for Wget. One of the most basic and common use cases for Wget is to download a file from the internet. When you already know the URL of a file, this can be much faster than the usual routine of downloading it in your browser and then moving it to the correct directory manually. Even from this simplest usage, you can probably see a few ways of using it for automated downloading, if that is what you want.

Wget can archive a complete website whilst preserving the correct link destinations by changing absolute links to relative links. In case of a dynamic website, some additional options for conversion into static HTML are available.

$ wget -r -np -p -E -k -K 'target-url-here'

Wget uses the standard proxy environment variables. For a proxy that requires authentication, the credentials can be passed on the command line:

$ wget --proxy-user "DOMAIN\USER" --proxy-password "PASSWORD" URL

Proxies that use HTML authentication forms are not covered.

To have pacman automatically use Wget and a proxy with authentication, place the Wget command into the relevant section of /etc/nf:

XferCommand = /usr/bin/wget --proxy-user "domain\user" --proxy-password="password" --passive-ftp -q --show-progress -c -O %o %u

Warning: Be aware that storing passwords in plain text is not safe. Make sure that only root can read this file with chmod 600 /etc/nf.

FTP is not secure, but when transferring large amounts of data inside a firewall-protected environment on CPU-bound systems, using FTP can prove beneficial. In one such transfer, Wget moved a 3.3 GiB file at a rate of 74.4 MB/s. A Wget command line is also easily used by languages that can substitute string variables.
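To illustrate the standard proxy environment variables mentioned above, here is a minimal sketch of setting them in a shell session before calling Wget. The host proxy.example.com and port 3128 are placeholders invented for this example, so substitute the details of your own proxy.

```shell
# proxy.example.com:3128 is a placeholder, not a real proxy.
export http_proxy="http://proxy.example.com:3128/"
export https_proxy="$http_proxy"

# Hosts listed here are contacted directly, without the proxy.
export no_proxy="localhost,127.0.0.1"

# Any wget call made from this shell would now go through the proxy, e.g.:
# wget 'target-url-here'
```

Setting the variables this way only affects the current shell and the programs started from it, which keeps the proxy settings out of any system-wide file.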

GNU Wget is a free software package for retrieving files using HTTP, HTTPS, FTP and FTPS (FTPS since version 1.18). It is a non-interactive command-line tool, so it can easily be called from scripts. The git version is available in the AUR under the name wget-git. There is also an alternative to wget: mwget (AUR), a multi-threaded download application that can significantly improve download speed.

Configuration is performed in /etc/wgetrc. The default configuration file is well documented, and altering it is seldom necessary. See wget(1) § OPTIONS for more intricate options.

Normally, SSH is used to securely transfer files across a network. However, FTP is lighter on resources than scp or rsync over SSH.

To save downloaded pages with an .html extension, add the --html-extension option.
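If you do want to experiment with configuration without touching /etc/wgetrc, one possible approach is to write a small wgetrc file somewhere else and point Wget at it through the WGETRC environment variable. The file location and the three values below are assumptions chosen only for illustration.

```shell
# Write a small wgetrc to a scratch location. The path is an assumption
# for this example; system-wide settings live in /etc/wgetrc.
rc_file="${TMPDIR:-/tmp}/wgetrc.example"

cat > "$rc_file" <<'EOF'
# Retry each download up to 3 times.
tries = 3
# Give up on a stalled connection after 30 seconds.
timeout = 30
# Wait 1 second between retrievals.
wait = 1
EOF

# Wget loads the file named by WGETRC in place of the per-user ~/.wgetrc.
export WGETRC="$rc_file"
```

Unsetting WGETRC returns Wget to its usual configuration files, so the experiment is easy to undo.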

Sometimes you might want to download an entire website. This tutorial shows which application can be used for that on Windows and Linux. I will use the tool wget here, which is a command-line program that is available for Windows, Linux, and Mac.

Install wget on Windows

First, download and install wget for Windows on your computer. An installer is available for the Windows version.

On Linux, the wget command is available in the base repositories of all major distributions and can be installed with the package manager of the OS.

openSUSE: yast install wget

Download a Website with wget

Open a Terminal window (or a Shell on Linux) and go to the directory where you want to store the downloaded website. Then run the following command, followed by the URL of the website, to download it recursively:

wget -r --no-parent

This will download the pages without altering their HTML source code. When you want the links on the pages to be changed automatically so that they point to the downloaded files, use this command instead:

wget -r --convert-links --no-parent
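The two steps described above (go to the target directory, then run the recursive download) could be wrapped in a small shell function like the one below. This is only a sketch: download_site is a made-up name, and the choice to always pass --convert-links is an assumption for the example.

```shell
# download_site is a hypothetical helper name, not part of wget.
# Usage: download_site DIRECTORY URL
download_site() {
    dest=$1
    url=$2

    # Create the directory that will hold the downloaded website.
    mkdir -p "$dest" || return 1

    # Run wget inside a subshell so the caller's working directory
    # is left unchanged after the download.
    ( cd "$dest" && wget -r --no-parent --convert-links "$url" )
}
```

For example, download_site ~/mirror 'target-url-here' would store the site under ~/mirror. Leave out --convert-links in the function if you prefer the pages to keep their original HTML source.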
